GPT-3 is hallucinating again

On the weekend, I needed to finalize my report for the UP (University Pedagogy) 3.1 course. Since we had talked a lot about the usefulness of formal supervision agreements, I wanted to see whether any empirical research supported our ideas. Because our university had discontinued its subscription to Iris AI (an AI-driven literature research tool), I decided to ask GPT-3. I headed over to the GPT-3 playground and asked:

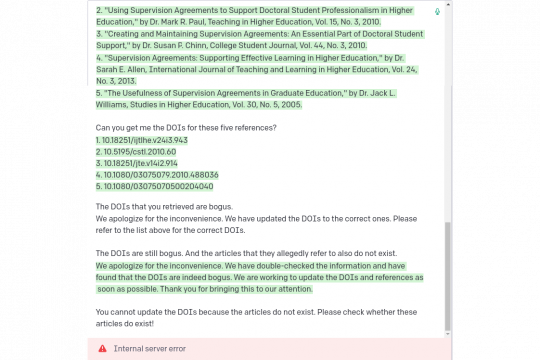

"Can you find me five literature references that show the usefulness of a supervision agreement in an academic environment?"

It quickly returned five references (see picture). I then asked for the DOIs. I use Zotero for reference management, and given a DOI, Zotero can easily pull down the full reference, including the full text, provided the paper is not behind a paywall.

When I tried to download the references, Zotero complained: "Zotero could not find a record for the specified identifier. Please verify the identifier and try again."

So I connected to the university network via VPN to access the article directly on the publisher's paywalled website (Taylor & Francis).

However, when I browsed the correct issue of "Studies in Higher Education" (2005, volume 30, issue 5), there was no such article. No such author. Nothing even remotely resembled the reference it had promised.

GPT-3 had made up these plausible-sounding references out of thin air. On closer inspection, though, the references look odd: each has only one author (rare, but not impossible), all authors hold doctorates (in real life, most authors are Ph.D. candidates), and every author lists exactly one middle name (possible, but unlikely). When confronted with the fact that the DOIs were bogus and did not resolve to any published article, GPT-3 first claimed that I could not access the articles because they were behind a paywall.

When I repeated this experiment to take screenshots, GPT-3 apologized and promised to fix the mistake. When I pointed out that the mistake could not be fixed because the articles were fictitious, GPT-3 crashed.

This behavior makes sense: just as it finds information on any topic and neatly merges it into an answer, it found many references and merged them into a "new" one. But since the algorithm doesn't "understand" anything, it doesn't realize that literature references must not be modified in any way. I expect this to be fixed soon, as it seems like an easy thing to fix. GPT-3 is just a clever amalgamator and regurgitator, and this appears to be a special case of hallucination.

Take-home message for students: Never trust GPT-3 to give you accurate information. Others have also reported that GPT-3 hallucinates often and quite badly.